In the previous post, we quickly reverse-engineered an Orange LiveBox Play set-top box and saw how easily it can be driven by simple HTTP requests.

But before implementing an interface between E.V.E and this kind of set-top box, we need to sit down and think a bit. Indeed, for now, E.V.E is nothing but a web browser using good old HTML5/JS technologies, running on a Raspberry Pi hooked up to a touchscreen. It’s quite easy to spawn AJAX requests from JavaScript, but since E.V.E will have to access external resources (devices) via HTTP, she will eventually run into cross-domain troubles.

Unfortunately, most consumer electronics devices are closed and proprietary, and so is my set-top box. Thus, there is nothing I can do to add the CORS headers I would need. Since I’m dealing with devices on a local network, an external CORS proxy, which cannot reach them, is no solution. The JSONP technique might be one, but some devices don’t exchange JSON data.

What I need is a local middleman that would act on E.V.E’s command, do a bit of work, and share the results with her. This is where A.D.A.M comes into play.

A Dramatically Abrupt Multiplexer (A.D.A.M)

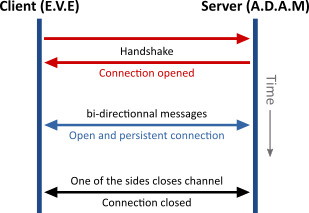

The simplest way to establish bidirectional communication with a web browser is to use a WebSocket.

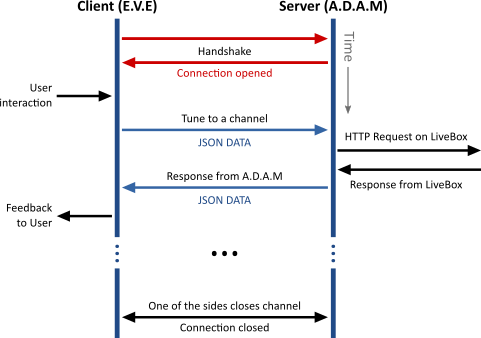

For our LiveBox TV functionality, a typical workflow would be:

Of course, A.D.A.M’s work would not be limited to spawning HTTP requests on command: it could also interact with the Raspberry Pi’s hardware, drive external devices via its GPIO interface, etc.
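One way to keep A.D.A.M open to such extra duties is a small opcode-to-handler registry. The sketch below is only an illustration under assumptions: the opcode names (`http_request`, `gpio_write`), the `opcode`/`data` message keys and both placeholder handlers are hypothetical, and no real hardware is touched.

```python
import json

# Maps an opcode name to the function that handles it.
HANDLERS = {}


def opcode(name):
    """Register the decorated function as the handler for `name`."""
    def register(func):
        HANDLERS[name] = func
        return func
    return register


@opcode("http_request")
def http_request(data):
    # Placeholder: a real handler would perform the HTTP request here.
    return {"requested": data.get("url")}


@opcode("gpio_write")
def gpio_write(data):
    # Placeholder: a real handler would drive the pin through a GPIO library.
    return {"pin": data["pin"], "value": bool(data["value"])}


def dispatch(raw):
    """Decode one JSON message, run its handler, return the JSON reply."""
    message = json.loads(raw)
    handler = HANDLERS.get(message.get("opcode"))
    if handler is None:
        return json.dumps({"opcode": "error", "data": "unknown opcode"})
    result = handler(message.get("data", {}))
    return json.dumps({"opcode": message["opcode"], "data": result})
```

With this shape, adding a new capability means writing one more decorated function, without touching the WebSocket plumbing.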

Typically, it could be used to record voice on E.V.E’s command, use a voice-to-text service (a locally running pocketsphinx or an external service such as Google translate or wit.ai), analyze the text (locally and/or via a cognition engine such as Wolfram Alpha), interact back with other devices if necessary and eventually give feedback to the user.

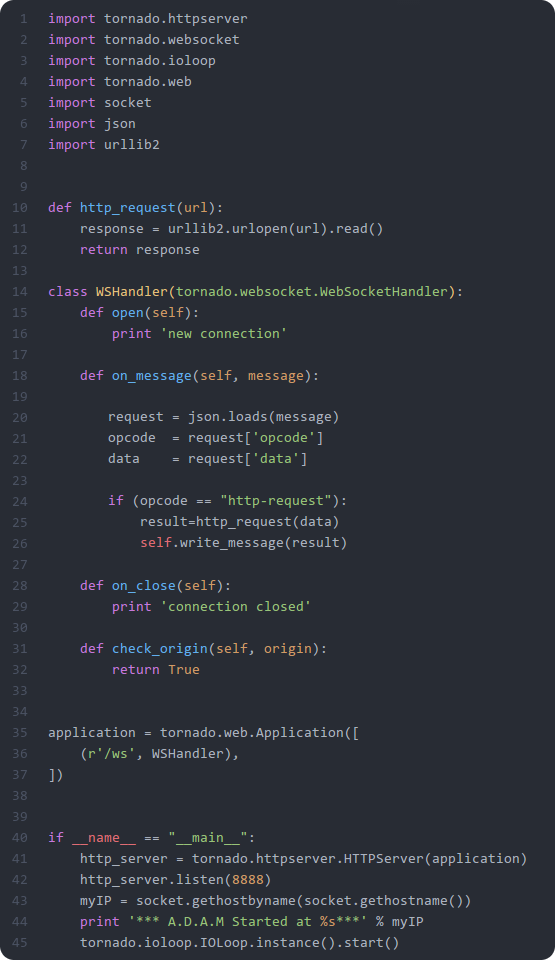

So I wrote the very first iteration of A.D.A.M. I chose Python. Because of the obvious snake joke with E.V.E and A.D.A.M. Because there are a lot of good Python wrappers for the Raspberry Pi (to drive the GPIO interface, or have fun with OpenCV, …). And because it lets you write powerful code with little effort.

For the WebSocket machinery, I used Tornado, a Python framework for asynchronous networking. It took fewer than 50 lines of code to get something useful enough:

It’s rather crude, but it manages the connection, reacts to JSON messages from E.V.E (here, the only opcode understood is an HTTP request), issues an HTTP request on demand and sends a JSON message back to E.V.E.
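To give an idea of the shape such a server can take, here is a minimal sketch built on Tornado's `WebSocketHandler`. It is not the original listing: the opcode names (`http_request`, `http_response`), the `opcode`/`data`/`url` keys, the `/ws` path and port 8888 are all assumptions made for illustration. The message logic is kept in a plain function so it can be exercised without Tornado installed.

```python
import json
import urllib.request


def perform_http_request(url, timeout=5.0):
    """Fetch `url` and return its status code and body."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        body = response.read().decode("utf-8", errors="replace")
        return {"status": response.status, "body": body}


def handle_message(raw, fetch=perform_http_request):
    """Turn one JSON message from the browser into one JSON reply."""
    try:
        message = json.loads(raw)
    except ValueError:
        message = None
    if not isinstance(message, dict):
        return json.dumps({"opcode": "error", "data": "invalid message"})
    if message.get("opcode") != "http_request":
        return json.dumps({"opcode": "error", "data": "unknown opcode"})
    # No validation of `data` and no handling of network errors: still a draft.
    result = fetch(message["data"]["url"])
    return json.dumps({"opcode": "http_response", "data": result})


def make_app():
    # Tornado is imported lazily so handle_message() stays usable without it.
    import tornado.web
    import tornado.websocket

    class AdamSocket(tornado.websocket.WebSocketHandler):
        def check_origin(self, origin):
            # Assumption: only trusted pages on the local network connect.
            return True

        def on_message(self, message):
            # The blocking fetch stalls the event loop while it runs,
            # which is tolerable for a single-user sketch only.
            self.write_message(handle_message(message))

    return tornado.web.Application([(r"/ws", AdamSocket)])


if __name__ == "__main__":
    import tornado.ioloop

    make_app().listen(8888)
    tornado.ioloop.IOLoop.current().start()
```

Passing `fetch` as a parameter makes it possible to try the dispatch logic with a fake fetcher before pointing it at a real device.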

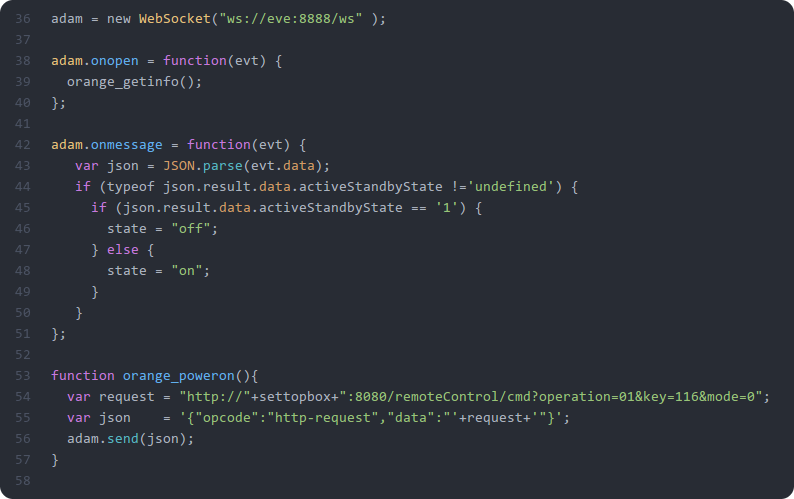

On E.V.E’s side, just a few lines of JavaScript were needed to manage the connection, send a JSON message to A.D.A.M (an opcode and some data) and react to messages from A.D.A.M:

Of course, it’s first-draft code and it’ll need a bit of love to be in better shape (for example, there is no error handling whatsoever for now).
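E.V.E herself speaks JavaScript, but the same exchange can be sketched in Python, which is handy for poking at A.D.A.M from a terminal without a browser. This is only an illustration: the `opcode`/`data` keys follow the description above, while the function names and the use of Tornado's `websocket_connect` client are my assumptions.

```python
import json


def encode_message(opcode, data):
    """Build the JSON envelope sent to A.D.A.M: an opcode and some data."""
    return json.dumps({"opcode": opcode, "data": data})


def decode_reply(raw):
    """Split a JSON reply from A.D.A.M into (opcode, data)."""
    reply = json.loads(raw)
    return reply["opcode"], reply.get("data")


async def ask_adam(url, opcode, data):
    """Send one message over a WebSocket and wait for one reply."""
    # Tornado is imported lazily so the two helpers above work without it.
    from tornado.websocket import websocket_connect

    connection = await websocket_connect(url)
    try:
        await connection.write_message(encode_message(opcode, data))
        raw = await connection.read_message()
        if raw is None:
            # read_message() yields None once the server has closed the socket.
            raise ConnectionError("A.D.A.M closed the connection")
        return decode_reply(raw)
    finally:
        connection.close()
```

With a recent Tornado release, something like `asyncio.run(ask_adam("ws://localhost:8888/ws", "http_request", {"url": "..."}))` should perform one round trip, assuming a server is listening at that hypothetical address.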

Interfacing E.V.E, A.D.A.M and a LiveBox Play

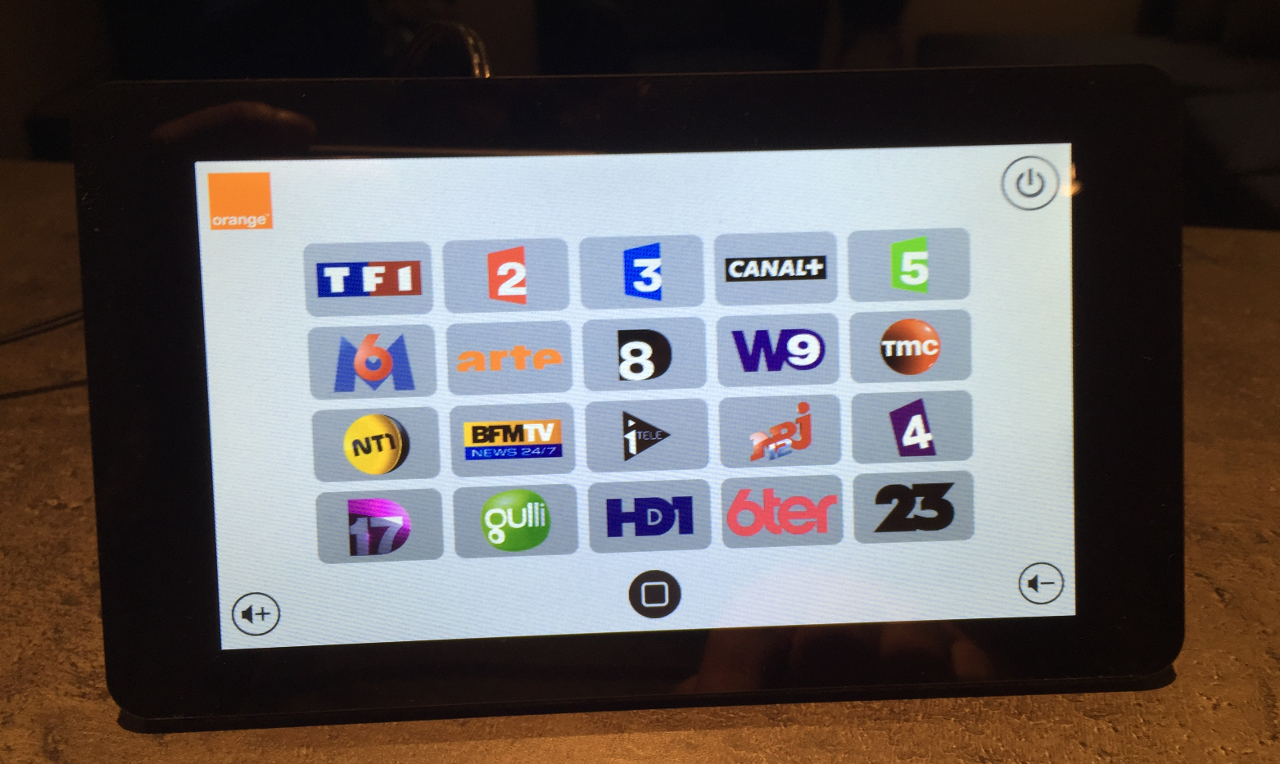

Just like the preceding code, E.V.E’s interface for TV will at first be very simple:

Within a few hours, this interface was implemented and functional on the Raspberry Pi:

And a bit later, it was hooked up to our reverse-engineering work from last night and ready for a first test drive:

Niiice! We now have a functional Raspberry Pi with a touch-enabled interface and a simple home dashboard with weather information, capable of exchanging messages with a general-purpose middleware through a WebSocket, and able to drive a set-top box and to play internet radio.

Since A.D.A.M is able to handle the local hardware, we have an architecture mature enough to experiment with voice interaction (and the new hardware I have received).