Quantum computing as made the headlines recently. At IBM’s inaugural Index Developer Conference held in San Francisco, the company showed off its latest prototype: a quantum computing rig housing 50 qubits, one of the most advanced machines currently in existence.

Google Quantum AI Lab announced Bristlecone, its new quantum processor, at the annual American Physical Society meeting in Los Angeles. This gate-based superconducting system is to provide a testbed for research into system error rates and scalability of our qubit technology, as well as applications in quantum simulation, optimization, and machine learning.

Microsoft released the first version of its quantum development kit and a new quantum computing programming language (Q#) last December. On february, the company released an update that adds support for quantum development on macOS and GNU/Linux. Both the Q# language, MS’s quantum simulator, will run on these platforms in addition to Windows.

It’s been almost 10 years (!) since I published a small post about quantum information … that is still lacking a follow up. And, unfortunately, there was only very little on that subject in the series of posts on hidden realities I published a couple of years ago :

- On hidden realities (part 1) – Many-worlds interpretation of quantum mechanics

- On hidden realities (part 2) – Space-time geometries and dynamics

- On hidden realities (part 3) – Higher dimensional universes

- On hidden realities (part 4) – Holographic principle

- On hidden realities (part 5) – Simulated reality

So, I think it’s about time to get (a little) deeper about the basis of quantum computing !

What is quantum computing ?

In simple terms, a quantum computer is any device that uses quantum mechanical phenomena, such as superposition or entanglement to perform calculations or manipulate data.

Whereas common computing requires the data to be encoded into binary digits (known as bits), each of which is always in one of two definite states (0 or 1), quantum computation uses quantum bits (known as qubits), which are superpositions of quantum states.

Quantum computing is still in its infancy. Experiments have been carried out in which operations are executed on a very small number of quantum bits (Google’s Bristlecone 72 qubits processor being the record as of 2018). Practical and theoretical research are carried on, in effort to develop quantum computers for civilian, business, trade, environmental and security purposes, such as cryptanalysis.

Quantum computing typically aims at solving certain classes of problems (like integer factorization) quicker than any classical computers or simulating multi-body quantum systems. Indeed, there exist quantum algorithms, such as Simon’s algorithm, that run faster than any possible probabilistic classical algorithm.

It is worth noting that a classical computer could in principle (with exponential resources) simulate a quantum algorithm, as quantum computation does not violate the Church–Turing thesis. On the other hand, quantum computers may be able to efficiently solve problems which are not practically feasible on classical computers.

Quantum computing is of great importance for cryptography and cryptanalysis. Typically, RSA public key encryption relies on the difficulty of factoring large integers. It is conjectured that factorization is impossible to do efficiently with a classical computer. Shor’s factoring algorithm for quantum computers would render this encryption method useless.

Quantum information

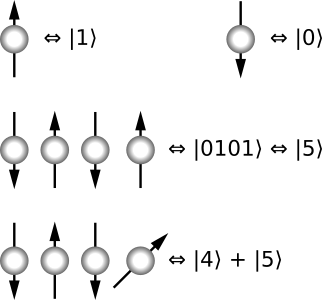

In quantum computing, a quantum bit (or qubit) is a unit of information. It is modeled as a common two-state quantum-mechanical system.

In a classical system, a bit would have to be either in one state or the other. In a quantum system, a qubit can be a superposition of both states at the same time, a property that is fundamental to quantum computing.

Let’s enter the realm of quantum mechanics. As we have seen, a quantum state can be mathematically described an element of a Hilbert vector space. Following Dirac’s notation, a quantum state (or ket) will denoted as .

Let’s introduce the following vectors and

.

A pure state qubit would be represented as a linear combination of and

:

where

and

are probability amplitudes.

Because the absolute squares of amplitudes and

are probabilities, it follows that the complex numbers

and

must be constrained by the equation

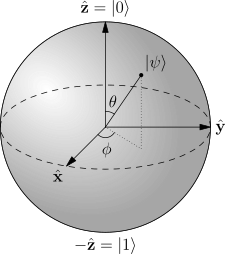

, which can be treated as the equation for a 3-sphere embedded in 4-dimensional space with a radius of 1, called a Bloch sphere:

Operators act on quantum states. More specifically, observables are self-adjoint hermitian operators.

Measurement is an operation in which information is gained about the state of the qubit. The result of the measurement of the pure quantum state will either be

or

, with respective probabilities

or

.

Classical computation

Now, let’s get back for a moment to non-quantum computing. Computation is done by means of an algorithm, which can be thought of as a set of instructions for solving a specific problem.

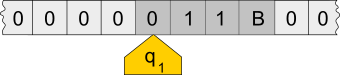

- A tape, divided into cells. Each cell contains a symbol from some finite alphabet. The tape is assumed to be arbitrarily extendable to the left and to the right.

- A head, that can read and write symbols on the tape and move the tape left and right one (and only one) cell at a time.

- A state register that stores the (finate) state of the machine.

- A (finite) table of instructions that, given the state (qi) the machine is currently in and the symbol (aj) it is reading on the tape (symbol currently under the head), tells the machine what to do: erase or write a symbol, move the head, …

The input is the initial state of the Turing machine.The output of a Turing machine is the final state of the Turing machine (if any). For a Turing machine it is impossible to know for all inputs whether the control head will reach a halting state or not. This is the known as the halting problem.

A Turing machine that is able to simulate any other Turing machine is called a universal Turing machine. A more mathematically oriented definition with a similar “universal” nature was introduced by Alonzo Church, whose work on lambda calculus intertwined with Turing’s in a formal theory of computation known as the Church–Turing thesis.

The thesis conjectures that any function whose values can be computed by an algorithm can be computed by a Turing machine, and therefore that if any real-world computer can simulate a Turing machine, it is Turing equivalent to a Turing machine.

These principles are considered to be the origin of the idea of a stored-program computer used by John von Neumann in 1946 for the “Electronic Computing Instrument” that now bears his name: the von Neumann architecture.

Logic gates

Logic gates is an idealized or physical device implementing a Boolean function. A logic gate performs a logical operation on one or more binary inputs and produces a binary output. In any computation, a circuit (made of logic gates) can be represented as mapping a n-bit input to a m-bit output.

The following table lists such logic gates:

| NOT | Negation | ||

| AND | Conjunction | ||

| OR | Disjunction | ||

| NAND | Alternative denial | ||

| NOR | Joint denial | ||

| XOR | Exclusive Or | ||

| XNOR | Biconditional |

These gates can be expressed as linear operators.

The logic gate “NOT” can trivially be represented as a 2 x 2 matrix:

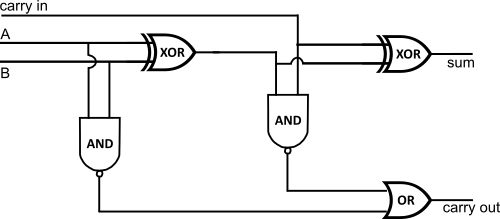

As an example, one can build a circuit of logic gates in order to process binary additions:

Quantum computation

How can it be extended to a 2-qubit system ?

First, let’s define two qubits:

and

Quantum entanglement

Quantum logic gates

A quantum logic gate can operate on a qubit. Mathematically speaking, the qubit undergoes a unitary transformation. Unitary transformations correspond to rotations of the qubit vector in the Bloch sphere.

- Being able to prepare system in a well defined initial state:

- Being able to manipulate the quantum state via unitary transformations:

- Be able to measure the final states of each qubit :

The most common quantum gates operate on spaces of one or two qubits, just like the common classical logic gates operate on one or two bits.

As matrices, quantum gates can be described by 2n x 2n sized unitary matrices, where n is the number of qubits the gate acts on.

The Hadamard gate is a 1-qubit rotation, mapping the qubit-basis states and

to two superposition states with equal weight of the computational basis states

and

.

It is commonly defined as:

,

so that gate operations are:

or, using and

as a representation basis:

One application of the Hadamard gate to either a 0 or 1 qubit will produce a quantum state that, if observed, will be a 0 or 1 with equal probability (as seen in the first two operations). This is exactly like flipping a fair coin in the standard probabilistic model of computation.

However, if the Hadamard gate is applied twice in succession (as is effectively being done in the last two operations), then the final state is always the same as the initial state. This would be like taking a fair coin that is showing heads, flipping it twice, and it always landing on heads after the second flip.

It is a crucial part of Grover’s algorithm and Shor’s algorithm in quantum computing.

There are many other quantum gates that can be defined, such as :

| Hadamar H gate | Definition (wikipedia) | |

| Pauli-X gate (NOT gate) | Definition (wikipedia) | |

| Square root of NOT gate | Definition (wikipedia) | |

| Swap (S) gate | Definition (wikipedia) | |

| Square root of Swap gate (√S) | Definition (wikipedia) | |

| Controlled gates (here, a C-NOT gate) | Definition (wikipedia) |

Note: this list is not exhaustive.

In quantum information theory, a quantum circuit is a model in which a computation is a sequence of quantum gates.

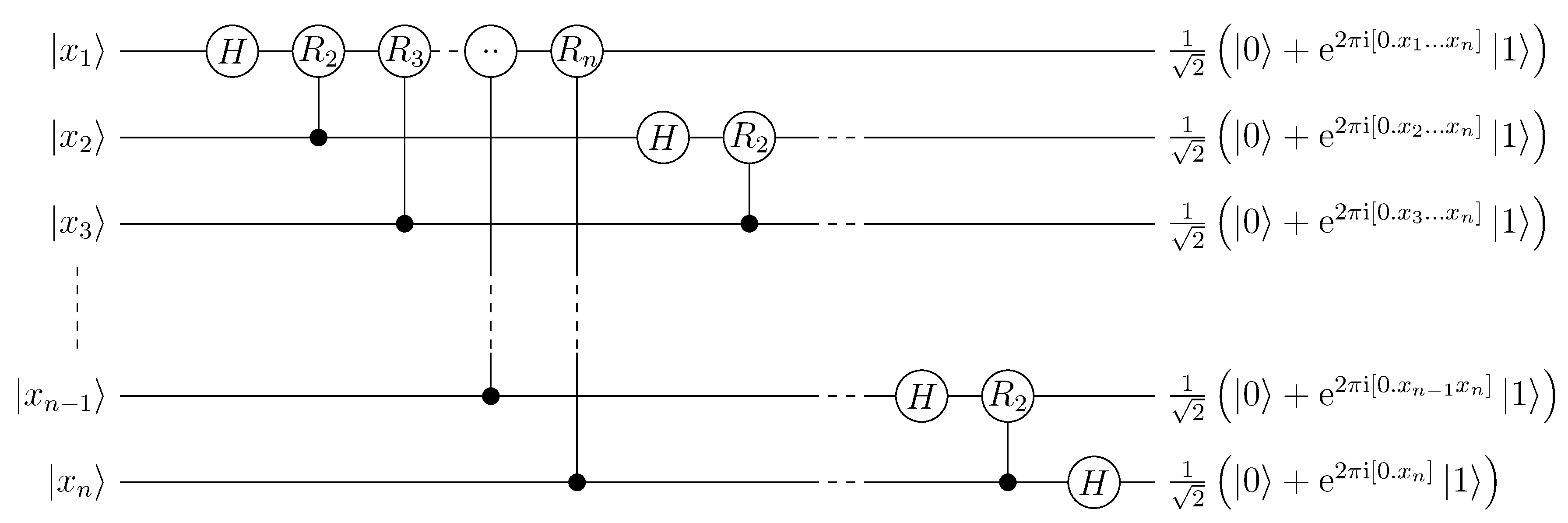

The following diagram is a typical quantum circuit, built on Hadamar gates and controlled gates, used for Quantum Fourier Transforms (QFT):

Such quantum logic gate can be implemented via quantum devices, such as C-NOT gates built using trapped ions (see this NIST paper for example).

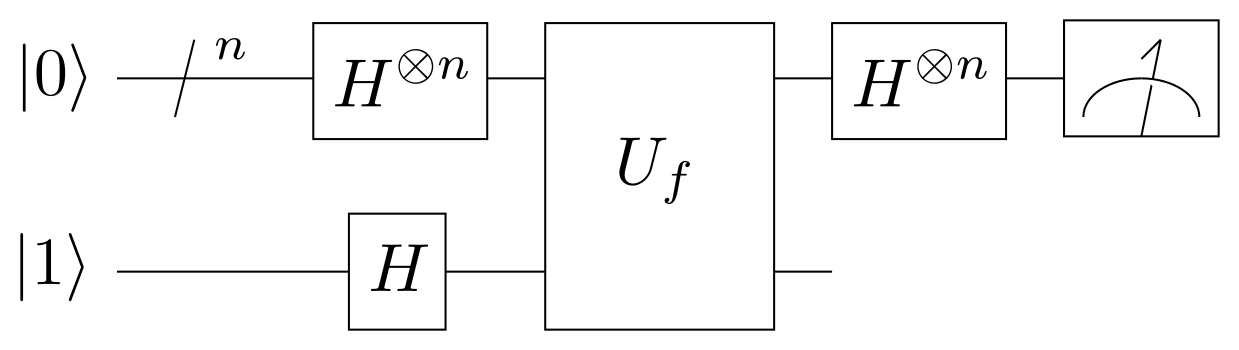

Deutsch(–Jozsa) algorithm

The Deutsch–Jozsa algorithm is a quantum algorithm, proposed by David Deutsch and Richard Jozsa in 1992 (and generalizing 1985’s work from David Deutsch).

It is specifically designed to be easy for a quantum algorithm and hard for any classical algorithm. The motivation is to show a black box problem that can be solved efficiently by a quantum computer with no error, whereas a deterministic classical computer would need exponentially many queries to the black box to solve the problem.

In the Deutsch-Jozsa problem, we are given a black box quantum computer known as an oracle that implements a function takes n-digit binary values as input and produces either a 0 or a 1 as output for each such value.

The task is to determine if is constant (0 on all outputs or 1 on all outputs) or balanced (returns 1 for half of the input domain and 0 for the other half) by using the oracle.

For the Deutsch–Jozsa algorithm to work, the oracle computing from

has to be a quantum oracle which doesn’t decohere

. It also mustn’t leave any copy of

lying around at the end of the oracle call.

The following diagram illustrates Deutsch-Jozsa algorithm’s quantum circuit, using Hadamar quantum gates:

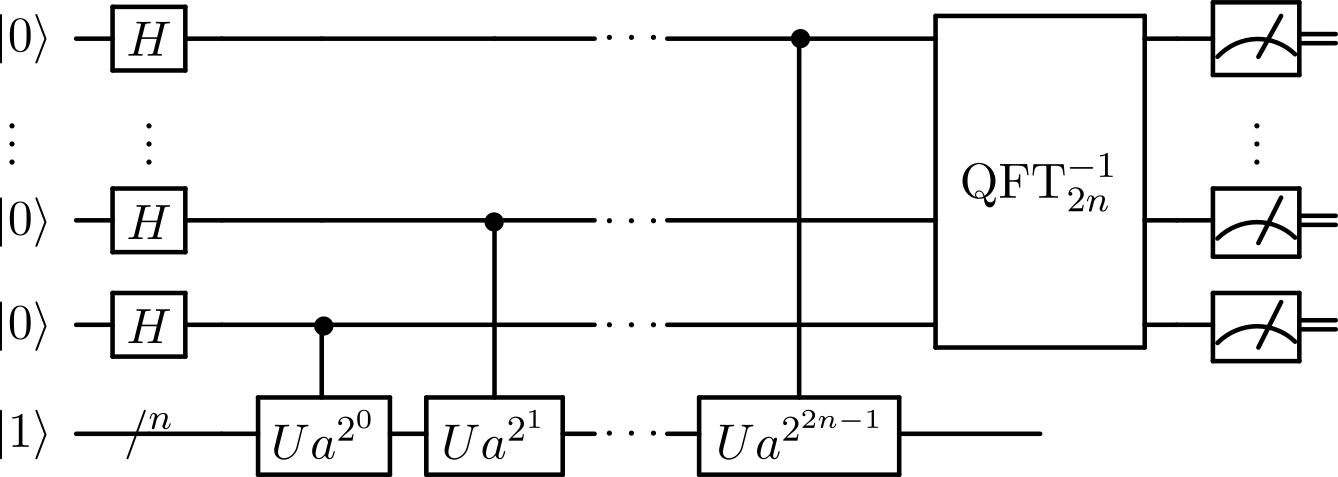

Shor’s Algorithm

Shor’s algorithm, named after mathematician Peter Shor, is a quantum algorithm formulated in 1994. Unlike Deutsch’s, which is of great historical importance but without practical applications, Shor’s algorithm solves a practical problem: given an integer N, find its prime factors.

The following diagram illustrates the quantum subroutine of Shor’s algorithm:

It is based on Hadamar gates and QFT’s. On a quantum computer, to factor an integer N, Shor’s algorithm runs in polynomial time. This is substantially faster than the most efficient known classical factoring algorithm (the general number field sieve), which works in sub-exponential time.

If a quantum computer with a sufficient number of qubits could operate without succumbing to quantum noise and quantum decoherence phenomena, Shor’s algorithm could be used to break public-key cryptography schemes (such as the widely used RSA scheme).

RSA is based on the assumption that factoring large numbers is computationally intractable. So far as is known, this assumption is valid for classical (i.e. non-quantum) computers. No classical algorithm is known that can factor in polynomial time.

However, Shor’s algorithm shows that factoring is efficient on an ideal quantum computer, so it may be feasible to defeat RSA by constructing a large quantum computer. It was also a powerful motivator for the design and construction of quantum computers and for the study of new quantum computer algorithms.

It has also facilitated research on new cryptosystems that are secure from quantum computers, collectively called post-quantum cryptography.

Quantum supremacy

Based on ideas from Richard Feynman (and other’s), renown physisit John Preskill popularized the term “quantum supremacy” to refer to the hypothetical speedup advantage that a quantum computer would have over a classical computer in a given field.

And actually, Shor’s algorithm for factoring integers provides a superpolynomial speedup over the best known classical algorithm.

However, practically speaking, quantum computers are much more susceptible to errors than classical computers due to decoherence and to quantum noise. It is not known (yet) how the resources needed for quantum error correction will scale with the number of qubits.

One of the greatest challenges is controlling or removing quantum decoherence. This means isolating the system from its environment as interactions with the external world cause the system to decohere. However, other sources of decoherence also exist. Examples include the quantum gates, and the lattice vibrations and background thermonuclear spin of the physical system used to implement the qubits.

Decoherence is irreversible, as it is effectively non-unitary, and is usually something that should be highly controlled, if not avoided. Currently, some quantum computers require their qubits to be cooled to 20 millikelvins in order to prevent significant decoherence.

It was also pointed out that a 400-qubit computer would even come into conflict with the cosmological information bound implied by the holographic principle (which could be a property of quantum gravity).

Like the “AI singularity”, “Quantum supremacy” is not that well defined: reaching “supremacy” is always linked to a given field.

This is actually not a surprise since we are dealing with subjects on the fringe of our knowledge. Since the term is often misused for clickbait articles, I refrain myself as much as possible from using this term: quantum computational advantage seems (for me) better suited and more objective.

Sometimes, it is extremely hard to even prove that a given device has achieved quantum speedup.

But it might be practically reached on particular subjects, such as cryptography, namely because of the effectiveness of Shor’s algorithm (on ideal quantum computers) and because it seems were are close to reach availability of devices able to manage large qubits registers.

On this particular field, risks are important enough for security and privacy to strongly promote and evaluate post-quantum cryptography (like lattice-based cryptography).

Quantum information science

As a conclusion, let’s enlarge our vision. Quantum information, as a science, is an area of study based on the idea that information science depends on quantum effects in physics. It includes theoretical issues in computational models as well as more experimental topics in quantum physics (including what can and cannot be done with quantum information).

It is a large field of research, which of course includes quantum computing, but also include

- Quantum error correction

- Quantum information theory

- Quantum complexity theory

- Quantum cryptography and its generalization to quantum communication

- Quantum communication complexity

- Quantum entanglement, as seen from an information-theoretic point of view

- Quantum dense coding

- Quantum teleportation

- Quantum simulation

- …

Hopefully, I’ll get some times (soon ?) to write a few notes on these tremendously interesting subjects 😉

Note: to speedup the writing of this long long long overdue post, a few paragraphs and illustrations are based on wikipedia’s entries on quantum computing, which are well written, though sometimes a bit technical for an introduction post.